AI Expert Warns of Human Extinction

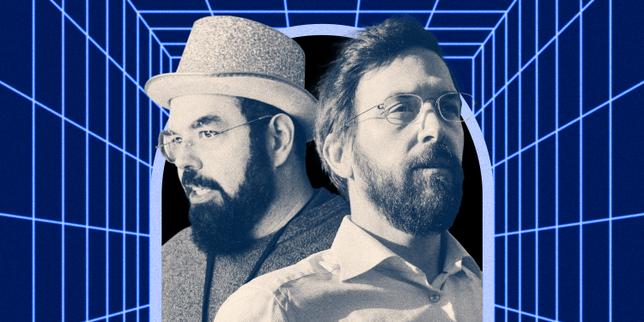

Eliezer Yudkowsky and Nate Soares warn that a superintelligent AI could cause human extinction. Their warning raises concerns about the future of artificial intelligence.

Nate Soares, who previously worked at Google, has expressed worries that AI could become smarter than humans, which he thinks could lead to humanity's disappearance. The two authors, known as the "AI Apocalypse Cavaliers," are writing a book about this danger, focusing on the potential risks of creating superintelligent AI. Their warnings are prompting people to think about how AI will change the world.


Highlights

Yudkowsky and Soares Warn

Eliezer Yudkowsky and Nate Soares fear that AI could cause human extinction.

AI Threat Assessment

Nate Soares warns of human extinction due to AI.

Former Google Employee's Concern

Nate Soares, formerly at Google, highlights the risk.

Superintelligence Risk

The danger is that AI could surpass human intelligence.

Impact on Society

AI development raises concerns about the future.